by Claudia Howe, GRC Competence Lead at GBTEC Austria (formerly avedos)

In my work as a consultant, I avoided heavily quantitative methods, simulation included, for years. My reasoning? They were too complicated, too opaque and too hard to understand across the entire organization, from risk evaluators to executives. They conveyed a sense of accuracy that didn’t exist. The effort involved was out of proportion to the added value (and what added value, by the way?). The better alternative was to build the foundation, gain acceptance, and firmly establish risk awareness in the corporate culture.

That wasn’t laziness or reluctance on my part to delve deeper into that area. It was merely a conviction of mine. And now? All at once, clients want to work quantitatively – and not only those in typical numbers-focused environments. They want to use simulations to answer specific questions. Does that mean I have to toss my beliefs aside?

Let’s first take a look at the typical requirements placed on us as a tool vendor.

We have observed that rating risks on a purely qualitative scale and then simply multiplying the probability of occurrence by the potential damage no longer suffices for many companies. More organizations are moving to quantitative evaluations, sometimes across multiple observation periods and usually in preparation for various types of simulation.

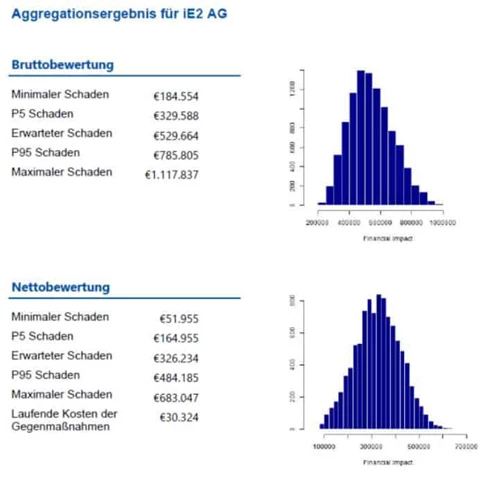

A simulation, in this context, means playing through a large number of randomly generated what-if scenarios and reading the result off the distribution of outcomes. Monte Carlo is typically the method of choice. The objective is to aggregate the individually evaluated risks into a statement on the total risk situation and to determine the value that will not be exceeded with a probability of 95%. One request that we often receive is the ability to apply different distributions and to account for dependencies among the risks. At this point, a first discussion often ensues with the business departments about the difference between correlation and causality and about the underlying reasons for this requirement.
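To make the aggregation step more tangible, here is a minimal Python sketch of a Monte Carlo run. It is an illustration under stated assumptions and not the implementation inside the BIC GRC Solutions: the risk register, its figures, the triangular damage distribution and the function names `simulate_total_loss` and `quantile` are all invented for this example, and the risks are treated as independent, which is a simplification.

```python
import math
import random
import statistics

# Hypothetical risk register (all figures invented for illustration):
# name, annual probability of occurrence, minimum / most likely / maximum damage in EUR
RISKS = [
    ("Supplier failure", 0.10, 50_000, 200_000, 800_000),
    ("IT outage", 0.25, 10_000, 60_000, 300_000),
    ("Regulatory fine", 0.05, 100_000, 250_000, 1_000_000),
]


def simulate_total_loss(risks, runs=20_000, seed=42):
    """Return one simulated total loss per run, assuming independent risks."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    totals = []
    for _ in range(runs):
        total = 0.0
        for _name, prob, low, mode, high in risks:
            if rng.random() < prob:  # does the risk occur in this scenario?
                total += rng.triangular(low, high, mode)  # draw its damage
        totals.append(total)
    return totals


def quantile(values, q):
    """Empirical quantile: smallest value with at least share q of the runs at or below it."""
    ordered = sorted(values)
    index = min(len(ordered) - 1, max(0, math.ceil(q * len(ordered)) - 1))
    return ordered[index]


totals = simulate_total_loss(RISKS)
expected_total = statistics.fmean(totals)
loss_95 = quantile(totals, 0.95)
print(f"Expected total loss:              {expected_total:,.0f} EUR")
print(f"Loss not exceeded in 95% of runs: {loss_95:,.0f} EUR")
```

The 95% figure is read off the simulated distribution rather than computed from the expected values, which is why it can lie far above the sum of the individual expected losses.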

Most requirements revolve around analysis capabilities and forms of visualization. Over time, for example, the idea often takes root of linking the aggregated effects to the corresponding items in planning or in the consolidated annual financial statements. Another common request is to determine the share of a certain risk category in the total result. Graphical visualizations such as distribution curves or tornado charts are also listed among the functional requirements.
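The share of a risk category in the total result can be sketched in a few lines of Python. This is an assumption-laden illustration, not the product's method: the categories, the figures and the function name `category_shares` are hypothetical, the risks are assumed to be independent, and the share is measured on the simulated sum of losses.

```python
import random
from collections import defaultdict

# Hypothetical risks (all figures invented for illustration):
# name, category, annual probability, minimum / most likely / maximum damage in EUR
RISKS = [
    ("Supplier failure", "Operational", 0.10, 50_000, 200_000, 800_000),
    ("IT outage", "Operational", 0.25, 10_000, 60_000, 300_000),
    ("Regulatory fine", "Compliance", 0.05, 100_000, 250_000, 1_000_000),
]


def category_shares(risks, runs=20_000, seed=7):
    """Return each category's share of the simulated total loss (shares sum to 1)."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    loss_by_category = defaultdict(float)
    for _ in range(runs):
        for _name, category, prob, low, mode, high in risks:
            if rng.random() < prob:  # risk occurs in this scenario
                loss_by_category[category] += rng.triangular(low, high, mode)
    grand_total = sum(loss_by_category.values())
    return {cat: loss / grand_total for cat, loss in loss_by_category.items()}


shares = category_shares(RISKS)
for category, share in sorted(shares.items(), key=lambda item: -item[1]):
    print(f"{category:<12} {share:6.1%}")
```

The same per-category totals could feed a bar or tornado-style chart; which measure the share refers to (expected value, a quantile, or something else) is a design decision that should be agreed with the business departments.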

We could have implemented all of these requirements in the past. Instead, we opted to make these changes within our core product, the BIC GRC Solutions (formerly risk2value), in order to provide faster, easier responses to these and other client requests in the future.

We now support the complete process of risk management – from quantitative evaluations and simulation, to aggregations and input, to activity optimization – fully integrated and directly within the BIC GRC Solutions. The following components are planned for use:

1. Template for quantitative risk evaluation

2. Template for recording actions

3. Methods for aggregating individual risks into a total risk position in R

4. Methods for correlating risks

5. Methods to determine actions by weighing their costs and benefits
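As a rough illustration of the last component, weighing the costs and benefits of actions, the following Python sketch compares the reduction in expected loss with the annual cost of each action. Everything in it is an assumption made for this example: the `Action` class, the function names, the single risk and all figures are hypothetical, and a simple expected-value view stands in for whatever method the product actually applies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    name: str
    annual_cost: float         # cost of the action per year, in EUR
    probability_factor: float  # remaining share of the probability (1.0 = unchanged)
    damage_factor: float       # remaining share of the damage (1.0 = unchanged)


def expected_loss(probability: float, damage: float) -> float:
    """Expected annual loss of a single risk."""
    return probability * damage


def net_benefit(probability: float, damage: float, action: Action) -> float:
    """Reduction in expected loss achieved by the action, minus its cost."""
    before = expected_loss(probability, damage)
    after = expected_loss(probability * action.probability_factor,
                          damage * action.damage_factor)
    return (before - after) - action.annual_cost


# Hypothetical risk: 25% annual probability, 120,000 EUR average damage
RISK_PROBABILITY = 0.25
RISK_DAMAGE = 120_000.0

ACTIONS = [
    Action("Redundant data centre", 12_000, probability_factor=0.4, damage_factor=1.0),
    Action("Incident response retainer", 5_000, probability_factor=1.0, damage_factor=0.7),
    Action("Full outsourcing", 30_000, probability_factor=0.2, damage_factor=1.0),
]

# Rank actions from highest to lowest net benefit
ranked = sorted(ACTIONS,
                key=lambda a: net_benefit(RISK_PROBABILITY, RISK_DAMAGE, a),
                reverse=True)
for action in ranked:
    benefit = net_benefit(RISK_PROBABILITY, RISK_DAMAGE, action)
    print(f"{action.name:<28} net benefit: {benefit:>10,.0f} EUR")
```

An action with a negative net benefit costs more than the expected loss it removes. It may still be justified on other grounds, for example because it cuts off a tail risk that an expected-value view does not capture.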

Initial experience from client projects confirms strong acceptance of our new approach. Our clients have so far been highly satisfied with the fulfillment of their functional requirements as well as with the calculation speed and the analysis options.

Photo credit (c) Stefan Heigl / RiskNET GmbH

The benefits of quantitative methods are easy to summarize:

Nonetheless, there is still good reason for doubt and skepticism. It is fueled by a few reported cases in which the quantitative methods were ultimately abandoned. In these instances, there was simply too much debate about the accuracy and interpretation of the methodological, mathematical and statistical approach, and too much confusion surrounding the individual results. That obviously leaves even less time to discuss ways of dealing with future risks.

That brings me back to where I started. In my opinion, the gradual shift toward quantitative methods is the logical result of the overall improvement in the maturity of risk management throughout a company. The respective maturity level is just as critical now as it was in the past, and all initiatives for the further development of risk management must be well planned and implemented in the right dose.

Quantitative methods and simulation methods can both be incorporated, but in my opinion they require a certain (higher) level of maturity. Without it, they cannot deliver the desired effects and benefits. Due to the nature of simulation methods, clean, mature and reliable data is of utmost importance. If factors such as a common risk language, a profound risk awareness in both the corporate culture and everyday actions, and the attention of top management to these topics are missing, the business departments must first focus on them in order to create this vital base.

Therefore, I still hold to my conviction: don’t quantify things just for the sake of quantifying them or of being formally correct. Quantify in order to actively practice and advance risk management in line with your organization’s current level of maturity. Quantitative methods leave much room for discussion. What is your opinion? I look forward to exchanging thoughts with you!
